The Changing Environment for AI in Finance [Future Finance at Bae, Kim & Lee]

Summary

- Financial supervisory authorities have announced the direction for revising the financial sector's AI guidelines, targeting a revision toward the end of 2025.

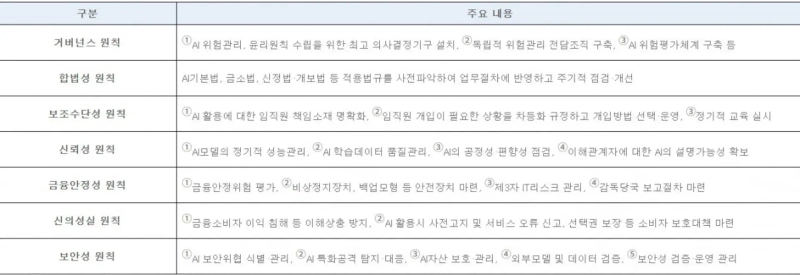

- The new draft guidelines set out seven operating principles and require financial institutions to establish a dedicated AI risk management organization and build a risk assessment framework.

- Because AI has become a prerequisite rather than an option in the financial industry, the authors hope domestic financial institutions will use the revised guidelines as a springboard to lead new financial standards.


The era of a 'great transformation' for AI in finance arrives

Compliance with the guidelines raises issues that must be considered

Key issues in the financial sector's 'AI guidelines'

A new era of sweeping AI transformation is arriving in finance as well. Moving beyond simple task automation, AI is penetrating every corner of financial services, from chatbots that take over customer-center functions to advanced credit scoring and transaction monitoring for anomaly detection. However, finance is an industry fundamentally rooted in 'trust' and 'stability.' Risks stemming from the opacity or bias of AI algorithms can spread beyond individual financial institutions to the national financial system as a whole. With these concerns in mind, financial supervisory authorities announced the direction for revising the financial sector's AI guidelines toward the end of 2025.

The financial supervisory authorities first established and announced AI operations guidelines for the financial sector in July 2021, and subsequently set out rules that financial institutions must follow when using AI in the Guide to AI Development and Utilization in Finance (August 2022) and the AI Security Guidelines for the Financial Sector (April 2023). With the explosive growth in the use of generative AI and changes in the technological and regulatory environment, such as the enactment of the Framework Act on Artificial Intelligence, they have now drawn up new guidelines that reflect these developments.

The existing AI operations guidelines were organized around the measures financial institutions should take at each stage of work: building governance, followed by the planning and design, development, evaluation and verification, and adoption, operation, and monitoring of AI systems. By contrast, the newly announced draft guidelines set out seven overarching operating principles: the governance principle, legality principle, auxiliary-means principle, reliability principle, financial stability principle, good-faith principle, and security principle.

Need to move toward 'accountable AI'

Under the revamped guidelines, financial institutions will above all need to implement accountable AI. Specifically, under the 'governance principle,' financial institutions must establish a top decision-making body for AI risk management, such as an AI ethics committee, and set up an independent, dedicated organization to control and manage risks across all AI-related work. In particular, they must build a comprehensive framework for assessing AI risks and establish and implement the procedures needed to apply differentiated controls and management according to risk level.

In addition, under the auxiliary-means principle, AI should in principle be used only as a supporting tool, and ultimate responsibility for AI outputs rests with the institution's executives and employees. Institutions must also define roles and responsibilities at each decision-making stage according to task criticality and risk level.

These measures seem reasonable in that they allow financial institutions to manage AI-related risks holistically. That said, further thought will be needed on how decision-making bodies and dedicated organizations for AI risk management should divide roles with existing units, such as compliance and risk management departments.

For example, under the legality principle, institutions developing and using AI must identify in advance the laws and regulations that apply in each sector, including the Financial Consumer Protection Act, reflect them in internal policies and business procedures, and periodically review and improve compliance. Managing and overseeing these requirements could overlap with the existing responsibilities of compliance monitoring departments, which oversee compliance across the institution, and of financial consumer protection departments, which handle matters related to protecting financial consumers.

In practice, extensive discussion will also be needed on how to classify AI systems into risk ratings and how to refine the controls applied at each risk level.

AI has become a prerequisite for survival, not an option, across all industries. We hope domestic financial institutions will treat this revision of the guidelines as a turning point and lead the way in setting new financial standards for the AI era.

Bae, Kim & Lee LLC’s Future Finance Strategy Center (Head: Senior Advisor Han Jun-seong) was launched in May 2024 and is building an elite lineup of experts across finance and IT—including virtual assets, electronic finance, regulatory response, and information security—in step with accelerating digital innovation in the financial sector and advances in financial technology.

Korea Economic Daily

hankyung@bloomingbit.io

The Korea Economic Daily Global is a digital media outlet covering the latest news on Korean companies, industries, and financial markets.