Nvidia’s ‘monster AI chip’ heart to rely only on Samsung and SK…Micron, once in pursuit, drops out

Summary

- Samsung Electronics and SK hynix have reportedly been selected as HBM4 suppliers for Nvidia’s Vera Rubin, while Micron has been excluded.

- Samsung Electronics has effectively cleared Nvidia’s HBM4 quality tests and begun shipping some volumes, putting it in a favorable position to maintain HBM dominance.

- Rising commodity D-RAM prices are forcing a profitability trade-off between HBM4 and commodity D-RAM, which lets Samsung present Nvidia with various negotiation cards.


Next-generation AI accelerator ‘Vera Rubin’ selects HBM4 suppliers

K-semiconductors seize the initiative in the ‘HBM war’

Samsung gains the upper hand, ships early volumes

SK hynix in final-stage optimization

As early as this month, HBM4 ‘simultaneous mass production’

D-RAM prices rising to HBM3E levels emerge as a variable

“Samsung likely to present a range of negotiation cards”

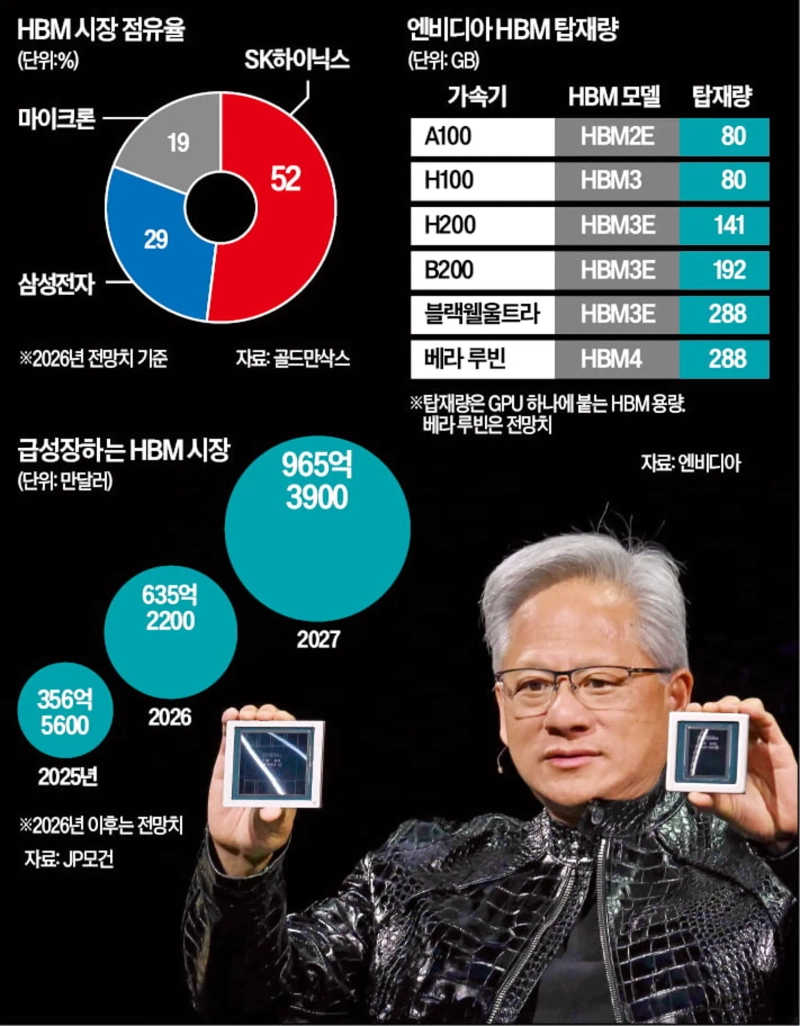

Since around 2022, when the era of artificial intelligence (AI) began in earnest, the company that has effectively determined the fortunes of memory chipmakers has been Nvidia. Those that made it into Nvidia’s high-bandwidth memory (HBM) supply chain became leading players in the AI industry; those that did not saw their standing slip. Samsung Electronics was among the latter. The crisis narrative that plagued Samsung’s semiconductor business for two years was sparked by delays in supplying HBM3 (4th-generation HBM) to Nvidia, and it ebbed after Samsung passed Nvidia’s quality test for 12-high HBM3E (5th-generation HBM) in September last year.

From this perspective, the inclusion of Samsung Electronics and SK hynix as HBM4 (6th-generation HBM) suppliers for Nvidia’s Vera Rubin, and the exclusion of Micron, is seen as highly significant. The two Korean firms stand to secure volume deliveries to Nvidia over the next one to two years, which would help them defend their dominance in HBM.

◇ Orders for high-performance HBM4

According to the semiconductor industry on the 8th, a physical unit of Vera Rubin will be unveiled for the first time at Nvidia’s developer conference, “GTC 2026,” to be held on the 16th in Silicon Valley, the US. The official launch date has not been set, but it is said to be slated for the second half of this year. Nvidia is staking everything on boosting Vera Rubin’s performance to more than five times the current level to overwhelm rivals such as AMD and Broadcom. More than 80 partner companies worldwide are working in lockstep with Nvidia to support the launch of the “monster AI accelerator.”

Since last year, Nvidia has singled out HBM4 as a key component underpinning Vera Rubin’s success and has urged memory makers to develop high-performance products. For Vera Rubin’s HBM4 operating speed, it has demanded 10Gb per second or higher, well above the 8Gb per second set by the semiconductor standards body JEDEC. Capacity has also been increased. Vera Rubin will use 16 stacks of HBM4, totaling 576GB. This is larger than the HBM4 capacity (432GB) of AMD’s next-generation AI accelerator, the MI450.
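For readers who want to check the arithmetic behind these figures, the following is a minimal Python sketch rather than anything published by Nvidia or JEDEC. The capacity and speed constants come from the figures quoted above. The 2048-bit interface width per HBM4 stack is an assumption not stated in this article, and the function names are hypothetical, chosen only for illustration.

```python
# Figures quoted in the article.
VERA_RUBIN_STACKS = 16          # HBM4 stacks per Vera Rubin
VERA_RUBIN_TOTAL_GB = 576       # total HBM4 capacity, in GB
MI450_TOTAL_GB = 432            # AMD MI450 HBM4 capacity, in GB
JEDEC_PIN_SPEED_GBPS = 8        # JEDEC per-pin speed, in Gb per second
NVIDIA_PIN_SPEED_GBPS = 10      # Nvidia's requested floor, in Gb per second

# Assumption (not from the article): each HBM4 stack has a 2048-bit interface.
ASSUMED_INTERFACE_BITS = 2048


def per_stack_capacity_gb(total_gb: float, stacks: int) -> float:
    """Average capacity of one stack, in GB."""
    return total_gb / stacks


def stack_bandwidth_gbytes_per_s(pin_speed_gbps: float,
                                 interface_bits: int = ASSUMED_INTERFACE_BITS) -> float:
    """Peak bandwidth of one stack in GB per second (8 bits per byte)."""
    return pin_speed_gbps * interface_bits / 8


if __name__ == "__main__":
    print("GB per stack:",
          per_stack_capacity_gb(VERA_RUBIN_TOTAL_GB, VERA_RUBIN_STACKS))
    print("Capacity vs MI450:",
          round(VERA_RUBIN_TOTAL_GB / MI450_TOTAL_GB, 2))
    print("Speed vs JEDEC:",
          NVIDIA_PIN_SPEED_GBPS / JEDEC_PIN_SPEED_GBPS)
    print("GB/s per stack at JEDEC speed:",
          stack_bandwidth_gbytes_per_s(JEDEC_PIN_SPEED_GBPS))
    print("GB/s per stack at Nvidia speed:",
          stack_bandwidth_gbytes_per_s(NVIDIA_PIN_SPEED_GBPS))
```

Under these assumptions, the quoted totals work out to 36GB per stack, about one-third more capacity than the MI450, and a per-pin speed 25% above the JEDEC figure.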

◇ Micron falls out for Vera Rubin

Global memory makers have gone all-in on the battle to supply HBM4 for Vera Rubin. Nvidia currently commands a share of over 80% in the AI accelerator market, where HBM is installed in large quantities, so winning its business would validate a supplier’s technological prowess while also promising strong earnings.

The race to supply HBM4 for Vera Rubin has narrowed to two winners, Samsung Electronics and SK hynix. Both were listed among the component suppliers, while Micron was not. An industry official said, “Micron is not being mentioned as an HBM4 supplier for Vera Rubin.”

Of the two, Samsung Electronics has recently pulled ahead. Samsung has effectively passed Nvidia’s HBM4 quality tests, which are being run on two tracks, for products operating at “10Gb per second” and “11Gb per second.” Last month it also began shipping finished products to Nvidia, though not in large volumes. SK hynix is also working with Nvidia on product optimization to clear the 11Gb test. Because it takes more than six months to go from loading D-RAM wafers for HBM4 to packaging, the two companies are expected to start mass-producing HBM4 as early as this month to be ready for a launch in the second half of the year.

That does not mean Micron will not supply HBM4 at all. Its HBM4 is more likely to be used in midrange products in the Rubin series than in the top-tier AI accelerator, Vera Rubin.

◇ Rising commodity D-RAM prices emerge as a variable

Allocated volumes and pricing for HBM4 for Vera Rubin have not yet been determined. Some in the industry say that while SK hynix will take more than half of Nvidia’s total HBM volume this year, including HBM3E, Samsung Electronics could become the largest supplier when it comes only to HBM4 for Vera Rubin. Samsung recently expressed strong confidence, saying, “This year’s HBM sales will triple from last year.”

A key variable is the price of commodity D-RAM, which is doubling quarter-on-quarter. The per-Gb price of server commodity D-RAM modules such as “SOCAMM2” is said to have risen to $1.3, approaching that of HBM3E, the current flagship HBM product. For Samsung, producing more commodity D-RAM could be better for profitability than producing HBM4, which requires additional costly processes such as stacking D-RAM.

Analysts also view Nvidia CEO Jensen Huang’s meeting with SK hynix engineers in Silicon Valley to urge HBM4 development as an effort to check Samsung’s strengthening bargaining power. An industry official said, “Samsung Electronics can hold HBM4 and commodity D-RAM as leverage and present Nvidia with a range of negotiation cards.”

By Hwang Jung-soo / Kim Chae-yeon, hjs@hankyung.com

Korea Economic Daily

hankyung@bloomingbit.io

The Korea Economic Daily Global is a digital media outlet covering the latest news on Korean companies, industries, and financial markets.